The time has come for another end-of-year summary of my reading from the previous year. Here are links to my previous end-of-year reflections: 2013, 2014, 2015.

I’ve continued to keep track of my reading using Goodreads. My profile contains the full list and reviews of books I’ve read since 2010. Here is my full 2016 list.

2016 Goal

My 2016 goal was to read one or two biographies. It is a genre that I typically don’t read and I felt like branching out. I’m going to consider this goal achieved. I read Open (my review) by Andre Agassi (well, ghostwritten for him) and Medium Raw by Anthony Bourdain. Both have been tagged as memoirs so in the strictest sense maybe I shouldn’t count them towards my goal but I’m counting them. Open tells Andre Agassi’s life story and claims to have been fact checked so it is pretty close to a biography.

Of the two, I drastically preferred Open. I would not recommend Medium Raw unless you know you like Bourdain’s writing and the book description sounds interesting to you. Open has a broader appeal and tells an interesting story of a man’s conflict, struggle and success.

2016 Numbers

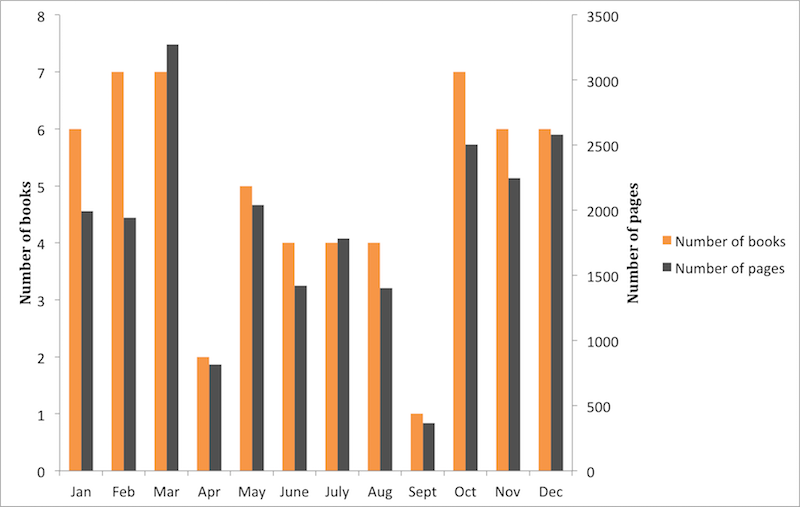

I read 59 books in 2016 for a total of 22,397 pages. I also read every issue of Amazon’s Day One weekly periodical (as I have every year since Day One started being published). Overall my rating distribution is pretty similar to 2015, with my three, four, and five star categories all containing two or three more books than the previous year.

Recommendations

I awarded twelve books a five star rating. I’ve listed them below in no particular order. The title links are affiliate links to Amazon and the review links go to my review on Goodreads.

- The Brain Audit: Why Customers Buy (And Why They Don’t) - Sean D’Souza (my review)

- The Charisma Myth - Olivia Fox Cabane (my review)

- Lying - Sam Harris (my review)

- To Sell Is Human: The Surprising Truth About Moving Others - Daniel Pink (my review)

- Climbing Anchors - John Long and Bob Gaines (my review)

- Creativity, Inc. - Ed Catmull and Amy Wallace (my review)

- Chasing the Scream - Johann Hari (my review)

- Deep Work - Cal Newport (my review)

- Seveneves - Neal Stephenson (my review)

- A Little Life - Hanya Yanagihara (my review)

- The Paper Menagerie and Other Stories - Ken Liu (my review)

- This Is How You Lose Her - Junot Díaz (my review) [1]

While I enjoyed all of the above books, a few stand out.

Chasing the Scream by Johann Hari

This book is about the war on drugs. It brings together studies, history, and anecdotes to present a compelling read on addiction and drug policy. I think it would be hard to finish this book and not be persuaded to vote for policies of drug decriminalization or legalization and more humane addiction treatments. The Goodreads reviews of this book are generally extremely positive, with 61% of them being five stars. Go read the book’s summary and a few reviews and you’ll want to read this book.

Deep Work by Cal Newport

This book is a game changer for those of us who have hobbies or work in fields where distraction-free concentration is beneficial or required. Cal Newport makes the argument that the ability to perform deep work is critical for mastering complicated information and producing great results. The book is split into two parts. The first defines and makes the argument for deep work. The second prescribes rules for enabling yourself to perform more deep work. I highly recommend reading this book and implementing the recommendations [2].

A Little Life by Hanya Yanagihara

This is a depressing and challenging book. As this review says, this book is about a terribly broken character, Jude, and his struggles. The writing is excellent. If you feel up to reading a long, difficult book that exposes you to some terrible experiences then read this book. I’m having a hard time saying this was my favorite fiction book from last year but it is the only five star fiction book I’m specifically calling out.

Moral Tribes by Joshua Greene

I didn’t give this book five stars but I’m still going to recommend Moral Tribes by Joshua Greene. This book explains how conflict arises when two moral groups meet and interact (an “Us” vs “Them” situation) and proposes Utilitarianism as a solution to this problem. While some parts of the book were a chore to finish, I’m glad I’ve read this book. My review highlights more of what I found interesting in this book.

There is a section of the book that presents both sides of the abortion debate, which I’ve shown to friends on both sides of the debate. All of them seemed to enjoy reading this small section of the book.

Series read this year

I finish almost every book I start reading. I read quickly enough that I find it worthwhile to finish marginal books just in case they turn out to be good. With a book series, the end of each book provides a checkpoint for me to reevaluate whether I want to continue the series. While none of the series below earned five stars, I’m highlighting them because each one represents a choice to stay in the author’s world.

I continued The Expanse series this year and read books 4-6. This is just great space opera and I’ll continue reading it until it stops being written.

I started and finished Don Winslow’s The Power of the Dog series. It tells the fictionalized tale of drug cartels in Mexico. Parts are brutal and violent and unfortunately often based on real events.

I read the three main books of Ann Leckie’s Imperial Radch. It is a neat science fiction story that deals with interesting topics. As a warning, I found the first book in the series to be the weakest. Keep pushing and read the second before giving up on this story.

Scott Meyer’s Magic 2.0 was a fun read in which the main character realizes he can edit a file and change reality. It is a silly premise and it delivers good, funny, lighthearted reading.

I also read Neil Gaiman’s American Gods and Anansi Boys. I devoured both of these books.

Technical books read

I didn’t read many books on software in 2016. In fact, I read the lowest number of software-related books since I started keeping track. I finished Ben Rady’s Serverless Single Page Apps and Ron Jeffries' The Nature of Software Development. I also read most of Google’s Site Reliability Engineering but did not finish it before the end of 2016. I read more non-fiction books than in 2015 but I would have liked to see more software books in the mix.

More stats

There were a couple of months where my reading took a definite dip. I don’t remember what I was doing during April or September but apparently I wasn’t reading.

Unsurprisingly, ebooks continued to be my preferred format, and I expect this trend to continue. At this point the only reason I’ll even potentially look at this stat next year is that it forces me to go through and confirm each book had its format recorded correctly.


My average rating was about the same as 2015.


I read multiple books by quite a few authors. Below is a table summarizing my repeat authors.

[Table of repeat authors not recovered]

2017 goals

This year I’m planning on revisiting some of my favorite books. I’m not going to set a concrete number for this goal and will just have to trust myself to honestly judge if I accomplish this goal.

1. This review is currently blank on Goodreads because this was a book read for a book club I’m part of and we haven’t met to discuss the book yet. We typically don’t post reviews online of books we’ll be discussing. To any of my book club members that are reading this post, I apologize for spoiling the surprise of what star rating I’m planning on giving this book.

2. I’m considering writing up more about this book and some changes I’ve made to help myself do more deep work.